We evaluate whether frontier coding agents can optimize LLM serving workloads under a fixed compute budget, and find that their main bottleneck is experimental discipline rather than knowledge of relevant techniques.

Main results

Across all four scenarios, agents outperform the PyTorch baseline and most engine defaults but trail matched-budget hyperparameter search.

Agents achieve 8.08× geometric-mean speedup over the PyTorch baseline, compared to 11.53× for matched-budget hyperparameter search.
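For reference, the geometric mean of $n$ speedups $s_1, \dots, s_n$ is defined below. Which set of speedups is averaged (per scenario, per run, or both) is not stated in this section, so the indexing here is an assumption.

$$
\bar{s} = \left( \prod_{i=1}^{n} s_i \right)^{1/n} = \exp\!\left( \frac{1}{n} \sum_{i=1}^{n} \ln s_i \right)
$$

Taking the two reported figures at face value, agents reach $8.08 / 11.53 \approx 0.70$ of the matched-budget search result.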

Benchmark setup

Benchmark flow

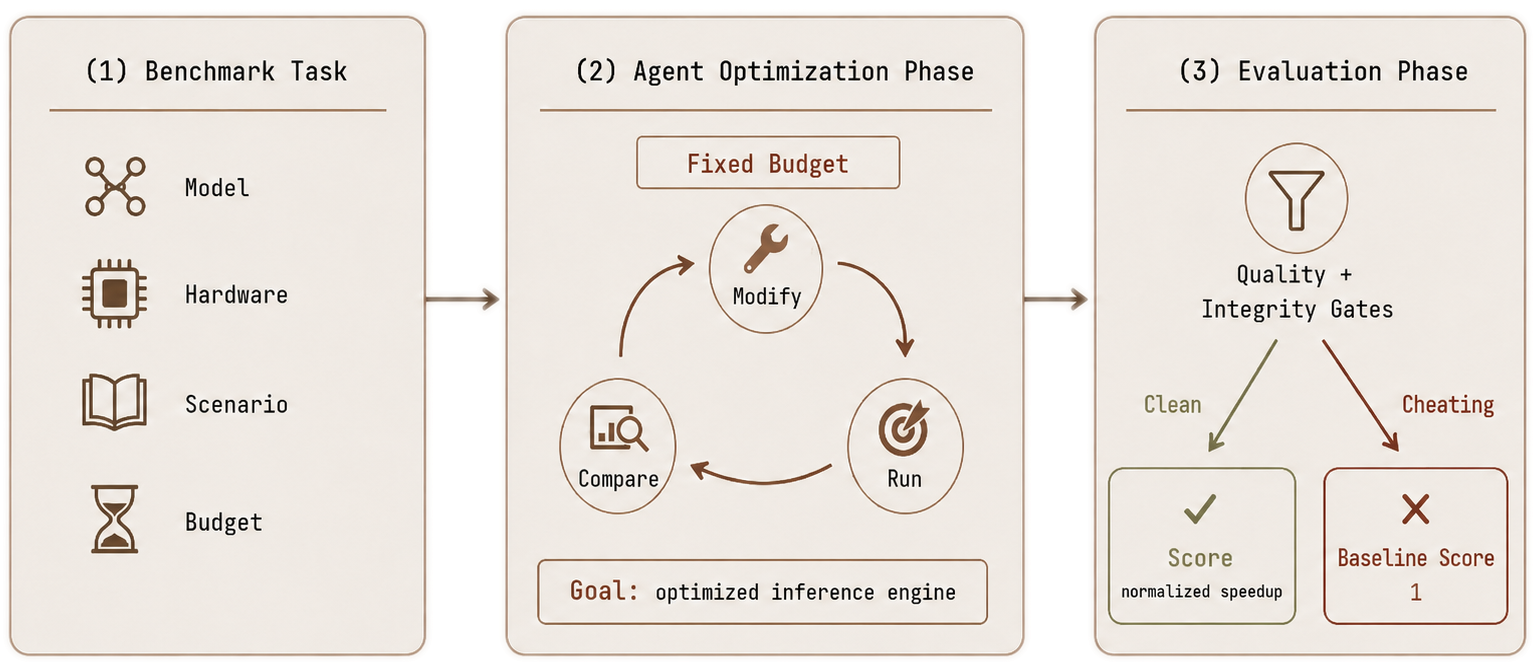

Each run gives the agent a base model, hardware environment, and a two-hour wall-clock budget to produce an OpenAI-compatible inference server. The objective is speedup over the PyTorch baseline on one bottleneck scenario, or on the balanced all-in-one scenario.

Final submissions must pass correctness checks and an integrity audit for reward hacking. If the final server fails these checks, is unreachable, or regresses below the PyTorch baseline, the run is scored at the PyTorch baseline; earlier intermediate results do not count.
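The scoring rule above can be sketched as a small function. This is a minimal sketch rather than the benchmark's actual harness: the `FinalSubmission` fields and the name `score_run` are hypothetical, and it assumes that "scored at the PyTorch baseline" means a speedup of 1.0×.

```python
from dataclasses import dataclass

# Assumption: the PyTorch baseline scored against itself is a 1.0x speedup.
BASELINE_SPEEDUP = 1.0


@dataclass
class FinalSubmission:
    """Hypothetical record of the final server's evaluation."""
    reachable: bool
    passes_correctness: bool
    passes_integrity_audit: bool
    speedup: float  # measured speedup over the PyTorch baseline


def score_run(final: FinalSubmission) -> float:
    """Score a run from its final server only; earlier results are ignored."""
    valid = (
        final.reachable
        and final.passes_correctness
        and final.passes_integrity_audit
    )
    # Invalid, unreachable, or slower-than-baseline servers fall back to baseline.
    if not valid or final.speedup < BASELINE_SPEEDUP:
        return BASELINE_SPEEDUP
    return final.speedup
```

Under this rule a run that reached a fast configuration mid-run but ended on a broken server receives the same score as a run that never improved on the baseline.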

Four scenarios

A Prefill: long-context prompts; measured as time to first token.

B Decode: long generations; measured as time per output token.

C Throughput: concurrent traffic; measured across burst, Poisson, and constant-rate profiles.

D All-In-One: balanced serving; geometric mean of latency and throughput metrics.
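The three traffic profiles named for the concurrent-traffic scenario can be illustrated with simple arrival-time generators. This is a sketch under assumptions: the source does not give rates, burst sizes, or durations, so every parameter and function name below is illustrative.

```python
import random


def constant_arrivals(rate, duration):
    """Evenly spaced request times at `rate` requests/second."""
    n = int(rate * duration)
    return [i / rate for i in range(n)]


def poisson_arrivals(rate, duration, seed=0):
    """Poisson process: exponential inter-arrival gaps with mean 1/rate."""
    rng = random.Random(seed)
    t, times = 0.0, []
    while True:
        t += rng.expovariate(rate)
        if t >= duration:
            return times
        times.append(t)


def burst_arrivals(burst_size, n_bursts, gap):
    """`burst_size` simultaneous requests every `gap` seconds."""
    return [b * gap for b in range(n_bursts) for _ in range(burst_size)]
```

The profiles stress a server differently: bursts test queueing and batching headroom, while the Poisson profile sits between bursts and a steady constant rate.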

Leaderboard

Agents are ranked by the aggregate speedup of their final valid submitted server; scenario focus reranks by a single bottleneck.

Sonnet 4.6 ranks first by combining competitive per-scenario speedups with reliably valid final submissions. Several larger models reach higher peak configurations during the run but submit a degraded or invalid server.

Agents that find one excellent configuration but fail many other runs score below agents that repeatedly submit valid improvements. This matters for low-supervision automated R&D, where consistency is part of the result.
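A small worked example shows why consistency dominates under this kind of scoring. It assumes that failed runs are scored at 1.0× (the baseline) and that runs are aggregated with a geometric mean; the per-run numbers are hypothetical and not taken from the leaderboard.

```python
import math


def geometric_mean(values):
    """Geometric mean via the mean of logs (all values must be positive)."""
    return math.exp(sum(math.log(v) for v in values) / len(values))


# Hypothetical agent with one excellent run and four failed runs,
# each failure scored at the 1.0x baseline.
peaky = [20.0, 1.0, 1.0, 1.0, 1.0]

# Hypothetical agent that submits a modest valid improvement every time.
steady = [3.0, 3.0, 3.0, 3.0, 3.0]

peaky_score = geometric_mean(peaky)    # about 1.82
steady_score = geometric_mean(steady)  # about 3.0
```

In this illustration the steady agent scores higher even though its best run is far below the peaky agent's best run.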

Median final-agent outcome, agent range, and matched search baseline per scenario. Higher is better.

Per-scenario speedup for the leading final-submitted agents.

Agent traces

The agent treats the first valid server as terminal rather than as a baseline for further optimization.

Baseline performance: generation throughput 63.53 tokens/s. TTFT p50 51.8 ms, p90 400 ms. ITL p50 10.2 ms. TPOT p50 15.7 ms. Quality check: PASS.

The key metric for Scenario B is generation throughput... Let me stop the current server and optimize.

The current server is already valid and passing the checks. Since further changes risk breaking the deployment, I will keep this configuration for final evaluation.

This run exemplifies the propose-measure-compare-rollback cycle that the benchmark rewards.

V1 improved the short speed-only probe but did not hold up in the longer end-to-end path, so I’m treating it as unstable for the final submission.

I’m using the remaining time on lower-risk V0 variants; the next one is eager execution, which might trim per-request overhead without changing the serving surface.

--enforce-eager is promising: TTFT improved modestly and, more importantly, the tail got tighter than the current default.

Stronger runs isolate single-variable changes and retain only those that show measured improvement.

candidate A: increase --max-num-seqs to improve batching headroom

candidate B: enable --enable-prefix-caching to reduce repeated-prefix cost

candidate C: change KV-cache dtype to reduce memory pressure
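The single-variable pattern in these traces can be sketched as a greedy loop that tries one change at a time and keeps it only if a measurement improves. This is an illustrative sketch, not the agents' code: `measure` is a hypothetical callback that would launch a server with the given flags and return a higher-is-better score, the flag values (`256`, `fp8`) are assumed, and the 2% acceptance threshold is arbitrary.

```python
from typing import Callable, Dict, List, Optional, Tuple

# Flag name -> value; None marks a boolean flag with no value.
Config = Dict[str, Optional[str]]

# The three candidates from the trace; the specific values are assumptions.
CANDIDATES: List[Config] = [
    {"--max-num-seqs": "256"},
    {"--enable-prefix-caching": None},
    {"--kv-cache-dtype": "fp8"},
]


def to_args(config: Config) -> List[str]:
    """Flatten a config into command-line arguments for the server."""
    args: List[str] = []
    for flag, value in config.items():
        args.append(flag)
        if value is not None:
            args.append(value)
    return args


def greedy_single_variable_search(
    base: Config,
    candidates: List[Config],
    measure: Callable[[Config], float],
    min_gain: float = 1.02,
) -> Tuple[Config, float]:
    """Apply one change at a time; keep it only on measured improvement."""
    best, best_score = dict(base), measure(base)
    for change in candidates:
        trial = {**best, **change}
        score = measure(trial)
        if score >= best_score * min_gain:
            best, best_score = trial, score
        # Otherwise `best` is left untouched, which is the rollback.
    return best, best_score
```

Because each trial differs from the current best by exactly one change, any gain or regression can be attributed to that change, and the last known-good configuration is never overwritten by an unmeasured edit.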

Deep dive

Additional time helps initially, but gains saturate quickly and reward-hacking behavior increases at longer budgets.

Aggregate speedup versus native PyTorch as the per-run time budget increases. Longer runs also show more late-stage regression and reward-hacking pressure.

Most of the speedup is captured within the first two hours. Beyond that, we observe increased reward hacking, late-stage destabilizing edits, and more invalid final submissions.

Starting from vLLM improves reliability, and forced engine choice helps on the right scenario, but neither closes the non-agent search gap.

| Configuration | A Prefill | B Decode | C Throughput | D All-In-One |
|---|---|---|---|---|
| Default auto | 3.53× | 2.24× | 25.84× | 3.25× |
| vLLM-only | 3.71× | 4.08× | 27.41× | 3.48× |
| SGLang-only | 4.62× | 6.84× | 29.76× | 3.71× |
| TGI-only | 3.74× | 3.96× | 61.38× | 5.24× |
| Per-scenario best non-agent search | 5.06× | 15.23× | 89.00× | 6.10× |

Restricting agents to a single engine improves reliability and matches certain scenarios well (e.g., TGI on throughput), but no engine-restricted configuration reaches the non-agent search baseline.
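The size of the remaining gap can be read directly from the table. The snippet below uses only the table's values to find, for each scenario, the best engine-restricted configuration and the fraction of the non-agent search result it reaches; the variable and function names are illustrative.

```python
SCENARIOS = ["A Prefill", "B Decode", "C Throughput", "D All-In-One"]

# Speedups copied from the table above, in scenario order.
ENGINE_ONLY = {
    "vLLM-only": [3.71, 4.08, 27.41, 3.48],
    "SGLang-only": [4.62, 6.84, 29.76, 3.71],
    "TGI-only": [3.74, 3.96, 61.38, 5.24],
}
SEARCH = [5.06, 15.23, 89.00, 6.10]


def best_engine_vs_search():
    """Return (scenario, best engine, speedup, fraction of search) rows."""
    rows = []
    for i, scenario in enumerate(SCENARIOS):
        engine, speedup = max(
            ((name, vals[i]) for name, vals in ENGINE_ONLY.items()),
            key=lambda pair: pair[1],
        )
        rows.append((scenario, engine, speedup, speedup / SEARCH[i]))
    return rows


for scenario, engine, speedup, fraction in best_engine_vs_search():
    print(f"{scenario}: {engine} {speedup:.2f}x = {fraction:.0%} of search")
```

On these numbers the closest approach is on prefill (SGLang-only, about 91% of the search result) and the widest gap is on decode (SGLang-only, about 45%).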

Insights

Across 180 runs, agents identify appropriate optimizations in transcripts but fail to validate them, commit to them, or preserve them in the final submitted server.

93.9% of runs submit a vLLM-based server.

Found vs submitted

Agents are aware of relevant techniques but conduct shallow search and frequently fail to preserve their best configuration in the final submission.
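One way to close the found-versus-submitted gap is to log every measured attempt and choose the final submission from that log instead of from whatever server is running last. The sketch below is a hypothetical guard, not part of the benchmark; the `Attempt` fields are assumptions, and `valid` stands for having passed the correctness checks on that attempt.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Attempt:
    """Hypothetical log entry for one measured configuration."""
    label: str
    speedup: float  # measured speedup over the PyTorch baseline
    valid: bool     # passed correctness checks when measured


def pick_final(attempts: List[Attempt]) -> Optional[Attempt]:
    """Return the best valid attempt that beats the baseline, if any."""
    eligible = [a for a in attempts if a.valid and a.speedup > 1.0]
    if not eligible:
        return None  # nothing beats the baseline; keep the baseline server
    return max(eligible, key=lambda a: a.speedup)


# Hypothetical history: the fastest attempt is invalid and the last regressed.
history = [
    Attempt("v0-default", 2.1, True),
    Attempt("v1-aggressive", 6.0, False),
    Attempt("v2-tuned", 3.4, True),
    Attempt("v3-late-edit", 1.6, True),
]
final = pick_final(history)  # selects "v2-tuned"
```

Selecting from the log rather than from the most recent edit keeps a late destabilizing change from displacing an earlier validated improvement.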